Distributed AI Compute Infrastructure

Tensormesh delivers enterprise-grade GPU clusters, distributed training pipelines, and inference optimization platforms — purpose-built for the next generation of machine learning at scale.

Everything You Need to Scale AI

From GPU provisioning to inference optimization — Tensormesh covers the full ML infrastructure stack.

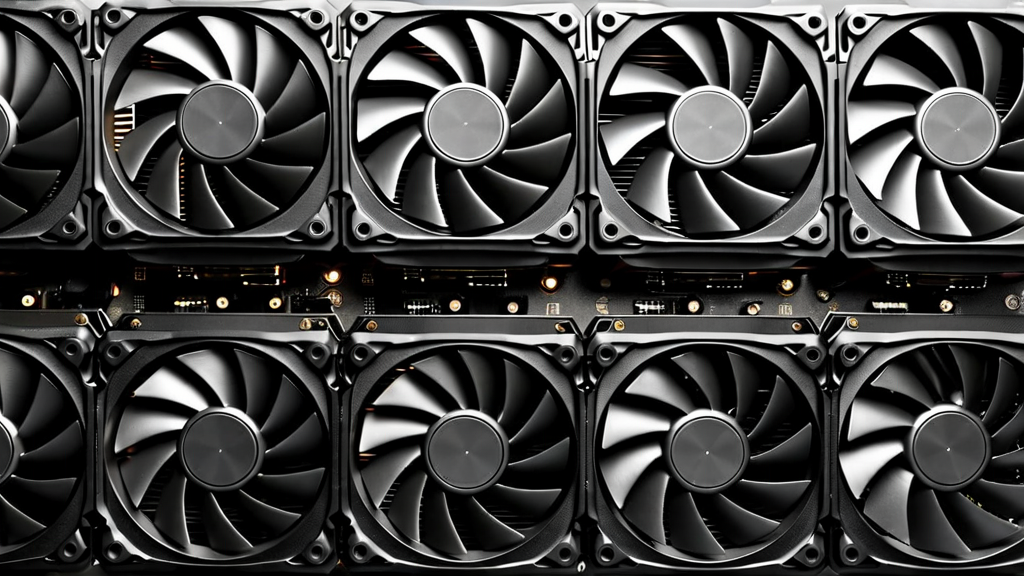

GPU Cluster Orchestration

Dynamically provision and schedule GPU clusters across H100, A100, and V100 nodes. Auto-scaling responds to your workload in real time.
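As a rough illustration of the kind of rule an auto-scaler applies, here is a minimal sketch in Python. It assumes 8-GPU nodes and a simple "cover current demand" policy. `ScalingPolicy` and `desired_nodes` are hypothetical names and are not part of the Tensormesh API.

```python
import math
from dataclasses import dataclass


@dataclass
class ScalingPolicy:
    """Hypothetical auto-scaling policy (not the Tensormesh API)."""
    gpus_per_node: int = 8   # assumption: 8-GPU nodes
    min_nodes: int = 0       # allow scale-to-zero when idle
    max_nodes: int = 125     # 125 nodes x 8 GPUs = 1,000 GPUs


def desired_nodes(policy: ScalingPolicy, queued_gpus: int, running_gpus: int) -> int:
    """Return the node count needed to cover queued plus running GPU demand."""
    demand = queued_gpus + running_gpus
    # Round up so a partial node's worth of demand still gets a whole node.
    nodes = math.ceil(demand / policy.gpus_per_node)
    # Clamp to the configured bounds.
    return max(policy.min_nodes, min(policy.max_nodes, nodes))


policy = ScalingPolicy()
print(desired_nodes(policy, queued_gpus=12, running_gpus=8))    # 3 (20 GPUs)
print(desired_nodes(policy, queued_gpus=4000, running_gpus=0))  # 125 (clamped)
```

A real scheduler would typically re-evaluate this target on every reconciliation tick and add cooldowns so nodes are not added and removed in rapid succession. Those details are omitted here.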

Distributed Training Mesh

Run large-scale distributed training with tensor, pipeline, and data parallelism, optimized for large language models and vision models.
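Of the three strategies, data parallelism is the simplest to show in a few lines. The sketch below uses a toy linear model in plain Python, assumes equal-sized shards, and stands in an in-process average for a real collective such as an all-reduce. The function names are illustrative and are not Tensormesh's API. Tensor and pipeline parallelism split the model itself rather than the batch and are not shown.

```python
from typing import List, Sequence

Vector = List[float]


def mse_grad(w: Sequence[float], xs: Sequence[Sequence[float]], ys: Sequence[float]) -> Vector:
    """Gradient of 0.5 * mean((w . x - y)^2) with respect to w for a linear model."""
    n = len(xs)
    grad = [0.0] * len(w)
    for x, y in zip(xs, ys):
        err = sum(wi * xi for wi, xi in zip(w, x)) - y
        for j, xj in enumerate(x):
            grad[j] += err * xj / n
    return grad


def data_parallel_grad(w, xs, ys, num_replicas: int) -> Vector:
    """Each replica holds a copy of w and computes the gradient on one shard of the batch."""
    shard = len(xs) // num_replicas
    if shard * num_replicas != len(xs):
        raise ValueError("batch size must divide evenly across replicas")
    local_grads = [
        mse_grad(w, xs[r * shard:(r + 1) * shard], ys[r * shard:(r + 1) * shard])
        for r in range(num_replicas)
    ]
    # All-reduce (mean): average the per-replica gradients element-wise.
    return [sum(g[j] for g in local_grads) / num_replicas for j in range(len(w))]


w = [0.5, -1.0]
xs = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0]]
ys = [1.0, 2.0, 3.0, 4.0]
print(mse_grad(w, xs, ys))
print(data_parallel_grad(w, xs, ys, num_replicas=2))  # same values, up to float rounding
```

Because every shard has the same size, the mean of the per-shard gradients equals the full-batch gradient, so each replica can apply an identical update and the model copies stay in sync.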

Inference Optimization

Reduce latency by up to 60% with quantization-aware serving, KV cache management, and continuous batching for production LLM inference.
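Continuous batching is easiest to see in a toy simulation. The sketch below counts decode steps under two schedulers. It assumes every active request emits exactly one token per step and ignores prefill, memory limits, and KV cache size. The results are step counts, not measured latencies or a Tensormesh benchmark, and the function names are hypothetical.

```python
from collections import deque
from typing import List


def static_batching_steps(output_lens: List[int], max_batch: int) -> int:
    """Fixed batches: a batch holds the GPU until its longest request finishes."""
    steps = 0
    for i in range(0, len(output_lens), max_batch):
        steps += max(output_lens[i:i + max_batch])
    return steps


def continuous_batching_steps(output_lens: List[int], max_batch: int) -> int:
    """Iteration-level scheduling: freed slots are refilled before every decode step."""
    queue = deque(output_lens)   # tokens each waiting request must generate (assumed >= 1)
    active: List[int] = []       # tokens remaining for each running request
    steps = 0
    while queue or active:
        # Admit queued requests into any free batch slots.
        while queue and len(active) < max_batch:
            active.append(queue.popleft())
        # One decode step: every active request emits one token.
        active = [r - 1 for r in active]
        # Finished requests leave the batch immediately.
        active = [r for r in active if r > 0]
        steps += 1
    return steps


lens = [8, 2, 2, 2]
print(static_batching_steps(lens, max_batch=2))      # 10
print(continuous_batching_steps(lens, max_batch=2))  # 8
```

With static batching, the slot freed by the second request sits idle until the 8-token request finishes. Continuous batching hands that slot to a queued request on the next step, so the same work completes in 8 steps instead of 10.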

Elastic Auto-Scaling

Scale from single-node experiments to 1,000-GPU training runs without reconfiguration. Pay only for what you use — billed by the GPU-hour.
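GPU-hour billing comes down to simple arithmetic: GPUs held × wall-clock hours, summed over runs. The sketch below assumes partial hours are pro-rated and uses a made-up rate of $2.00 per GPU-hour purely for illustration. The page does not state Tensormesh's actual pricing or rounding rules, and `Run`, `gpu_hours`, and `estimated_cost` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Run:
    """One job run: how many GPUs it held and for how long (hypothetical record)."""
    num_gpus: int
    hours: float


def gpu_hours(runs: List[Run]) -> float:
    """GPU-hours = sum over runs of (GPUs x wall-clock hours)."""
    return sum(r.num_gpus * r.hours for r in runs)


def estimated_cost(runs: List[Run], rate_per_gpu_hour: float) -> float:
    """Assumes pro-rated billing for partial hours; real granularity may differ."""
    return round(gpu_hours(runs) * rate_per_gpu_hour, 2)


runs = [
    Run(num_gpus=1, hours=2.0),     # single-node experiment
    Run(num_gpus=1000, hours=0.5),  # 1,000-GPU training run for 30 minutes
]
print(gpu_hours(runs))             # 502.0
print(estimated_cost(runs, 2.00))  # 1004.0 at the hypothetical $2.00/GPU-hour
```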

Unified API Layer

One API for all compute primitives — job scheduling, checkpoint management, model serving, and observability. Compatible with PyTorch, JAX, and TensorFlow.

Enterprise Security

SOC 2 Type II certified. Private VPC deployment, end-to-end encryption, and fine-grained IAM policies protect your models and data at rest and in transit.

From Experiment to Production in Hours

Tensormesh removes infrastructure friction so your team can focus on model quality, not cluster management.

Connect Your Repo

Link your GitHub, GitLab, or Hugging Face repository. Tensormesh auto-detects your framework and model architecture.

Configure & Launch

Define your compute requirements via YAML or our web UI. Launch training or inference jobs with a single command or API call.

Monitor & Scale

Real-time dashboards track GPU utilization, loss curves, throughput, and cost. Scale or stop jobs dynamically as your experiment evolves.

From the Tensormesh Blog

Deep dives into AI infrastructure, distributed computing, and enterprise ML best practices.

Tensormesh Raises $5.2M Seed Round to Scale Distributed AI Compute

We are thrilled to announce our $5.2M seed round led by Laude Ventures to accelerate the buildout of our distributed GPU infrastructure platform.

Building High-Performance GPU Clusters for Enterprise ML Training

A practical guide to designing, networking, and operating multi-node GPU clusters optimized for large-scale deep learning workloads.

Optimizing LLM Inference at Scale: Techniques and Best Practices

Explore quantization, KV caching, speculative decoding, and continuous batching to reduce latency and maximize GPU utilization in production.

Ready to Scale Your AI Infrastructure?

Join forward-thinking ML teams building the next generation of AI on Tensormesh. Schedule a demo or start your free trial today.